Leaky Rectified Linear Unit

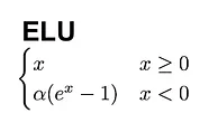

The ReLU (rectified linear unit) is one of the most commonly used activation functions in neural networks due to its simplicity and efficiency. It is defined as f(x) = max(0, x), so its range is [0, ∞): for any input value x, it returns x if x is positive and 0 otherwise. Another variant is the Randomized Leaky Rectified Linear Unit (RReLU), which randomly samples the slope of the negative part and improved performance on image benchmark datasets and convolutional networks (Xu et al., 2015). In contrast to ReLUs, activation functions such as LReLUs, PReLUs and RReLUs do not guarantee a noise-robust deactivation state.
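The definition f(x) = max(0, x) can be sketched in a few lines of plain Python. This is only an illustration: the function name `relu` and the sample inputs are chosen here and do not come from any particular library.

```python
def relu(x: float) -> float:
    """Return x for positive inputs and 0 otherwise, i.e. max(0, x)."""
    return max(0.0, x)


# Sample inputs chosen purely for illustration.
samples = [-2.0, -0.5, 0.0, 1.5, 3.0]
outputs = [relu(x) for x in samples]

# Negative inputs are clipped to zero, positive inputs pass through unchanged.
print(outputs)
```

Every output lies in [0, ∞), which matches the range stated above.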

Rectified Linear Unit vs. Leaky Rectified Linear Unit

But this approach causes some issues. Although ReLU is widely used, it has limitations.

Leaky ReLU (2014) allows a small, positive gradient when the unit is inactive, [6] helping to mitigate the vanishing gradient problem.

Leaky rectified linear unit (Leaky ReLU) is an activation function that addresses the dying ReLU problem by introducing a small, fixed slope for negative inputs, so the gradient there is small but nonzero. It functions as an enhanced version of the standard rectified linear unit (ReLU), designed specifically to mitigate the dying ReLU problem, a scenario where neurons become inactive and cease learning entirely. ReLU is the most widely used activation function in modern neural networks. It allows the model to activate or deactivate neurons by setting negative input values to zero while leaving positive values unchanged.
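As a rough sketch of the behavior described above, Leaky ReLU can be written as a one-line branch. The parameter name `negative_slope` and its default of 0.01 are assumptions made for this example; the text only says the slope is small and fixed.

```python
def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    """Pass positive inputs through; scale non-positive inputs by a small fixed slope.

    negative_slope=0.01 is an assumed illustrative default, not a value
    taken from the text.
    """
    return x if x > 0 else negative_slope * x


# Positive inputs are unchanged, negative inputs are shrunk rather than zeroed.
for x in [-2.0, -0.5, 0.0, 1.5]:
    print(x, leaky_relu(x))
```

Setting `negative_slope=0.0` recovers the standard ReLU, which makes the relationship between the two functions easy to see.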

The leaky rectified linear unit (Leaky ReLU) activation operation performs a nonlinear threshold operation, where any input value less than zero is multiplied by a fixed scale factor. Leaky ReLU is a type of activation function commonly used in neural networks. Its derivative with respect to x is 1 for x > 0 and equal to the fixed scale factor for x < 0. Leaky ReLU is used in computer vision and speech recognition with deep neural networks.
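The derivative of Leaky ReLU with respect to x can be sketched as follows, together with a finite-difference check. The names `leaky_relu_grad` and `negative_slope`, the default of 0.01, and the choice to use the negative-side slope at exactly x = 0 (where the function is not differentiable) are all assumptions made for this example.

```python
def leaky_relu(x: float, negative_slope: float = 0.01) -> float:
    """Leaky ReLU: x for x > 0, negative_slope * x otherwise."""
    return x if x > 0 else negative_slope * x


def leaky_relu_grad(x: float, negative_slope: float = 0.01) -> float:
    """Derivative of leaky_relu with respect to x.

    At x == 0 the function has a kink; this sketch returns the
    negative-side slope there by convention (an assumption).
    """
    return 1.0 if x > 0 else negative_slope


def numeric_grad(x: float, h: float = 1e-6) -> float:
    """Central finite difference, used only to sanity-check the formula."""
    return (leaky_relu(x + h) - leaky_relu(x - h)) / (2 * h)


# Compare the analytic and numeric derivatives away from the kink at 0.
for x in [-2.0, 3.0]:
    print(x, leaky_relu_grad(x), numeric_grad(x))
```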

Leaky version of a rectified linear unit activation layer

This layer allows a small gradient when the unit is not active. The leaky rectified linear unit (Leaky ReLU) is an activation function commonly used in deep learning models. The traditional rectified linear unit (ReLU) activation function, although widely employed, suffers from a limitation known as the dying ReLU problem. ReLU is a popular activation function used in neural networks, especially in deep learning models.
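To make the dying ReLU limitation concrete, here is a toy sketch with a single weight, a single input, and a squared-error loss. Everything in it (the helper names, the starting weight of -1.0, the learning rate, the number of steps) is a hypothetical setup chosen for illustration, not a procedure from the text.

```python
def grad_wrt_weight(w: float, x: float, target: float, negative_slope: float) -> float:
    """Gradient of 0.5 * (act(w * x) - target)**2 with respect to w.

    negative_slope == 0.0 gives plain ReLU; a small positive value gives Leaky ReLU.
    """
    z = w * x
    a = z if z > 0 else negative_slope * z        # activation output
    slope = 1.0 if z > 0 else negative_slope      # activation derivative
    return (a - target) * slope * x               # chain rule


def train(w: float, negative_slope: float, steps: int = 500,
          lr: float = 0.5, x: float = 1.0, target: float = 1.0) -> float:
    """Run plain gradient descent on the single weight w."""
    for _ in range(steps):
        w -= lr * grad_wrt_weight(w, x, target, negative_slope)
    return w


# Both neurons start inactive (w * x < 0).
w_relu = train(-1.0, negative_slope=0.0)    # gradient is zero, so w never moves
w_leaky = train(-1.0, negative_slope=0.01)  # small gradient lets w recover

print("ReLU weight:", w_relu)
print("Leaky ReLU weight:", w_leaky)
```

With plain ReLU the weight stays at its starting value because the gradient is exactly zero while the unit is inactive. With the leaky variant the small gradient slowly pushes the weight back into the active region, after which it converges toward the target.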

ReLU has become the default choice in many architectures due to its simplicity and efficiency. The ReLU function is a piecewise linear function that outputs the input directly if it is positive and zero otherwise. The parabolic equation (PE) serves as a fundamental methodology for modeling underwater acoustic propagation. Common activation functions include sigmoid, tanh, hard tanh, softsign, ReLU (rectified linear unit), and leaky/parametric ReLU.

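The identity function f(x) = x is a basic linear activation, unbounded in its range. The rectified linear unit (ReLU), also called the corrected linear unit, is an activation function commonly used in artificial neural networks, and usually refers to nonlinear functions represented by the ramp function and its variants. Commonly used rectifier functions include the ramp function f(x) = max(0, x) and the leaky rectifier (Leaky ReLU), where x is the input to the neuron. Activation functions like the rectified linear unit (ReLU) are a cornerstone of modern neural networks. This article introduces ReLU, a workhorse of deep learning, and its variants; their wide use in neural networks matters for improving network performance and accelerating training. 1. The ReLU function. 1.1 Definition: the ReLU (Rectified Linear Unit) activation function is, in modern deep learning, …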

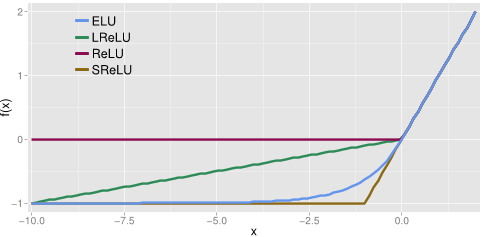

In this paper we investigate the performance of different types of rectified activation functions in convolutional neural networks: the standard rectified linear unit (ReLU), the leaky rectified linear unit (Leaky ReLU), the parametric rectified linear unit (PReLU), and a new randomized leaky rectified linear unit (RReLU). We evaluate these activation functions on standard image classification tasks.
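A minimal sketch of the randomized variant (RReLU) is given below. It assumes a multiplicative convention: during training, the negative-side slope is drawn uniformly from an interval, and at evaluation time the midpoint of that interval is used. The function signature, the `training` flag, and the bounds 1/8 and 1/3 (the defaults of `torch.nn.RReLU`) are choices made for this example and may differ from the exact formulation in the paper.

```python
import random
from typing import Optional


def rrelu(x: float, lower: float = 1 / 8, upper: float = 1 / 3,
          training: bool = True, rng: Optional[random.Random] = None) -> float:
    """Randomized leaky ReLU sketch (bounds and signature are assumptions).

    Training: the negative-side slope is sampled from U(lower, upper).
    Evaluation: the fixed midpoint (lower + upper) / 2 is used instead.
    """
    if x >= 0:
        return x
    if training:
        source = rng if rng is not None else random
        slope = source.uniform(lower, upper)
    else:
        slope = (lower + upper) / 2
    return slope * x


# A seeded generator keeps the training-mode sample reproducible.
rng = random.Random(0)
train_out = rrelu(-4.0, training=True, rng=rng)
eval_out = rrelu(-4.0, training=False)

print("training-mode output:", train_out)
print("evaluation-mode output:", eval_out)
```

For comparison, fixing the slope recovers Leaky ReLU, and learning it as a parameter corresponds to PReLU.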

Units that use the rectifier function are also called rectified linear units (ReLU) [8]. Rectified linear units have been applied to computer vision [5] and speech recognition [9][10] using deep neural networks.